Twitter investigates as users point out alleged AI bias against women & people of colour

A Twitter user alleged that the image preview and cropping algorithm on the social media app is biased, picking white faces over black faces.

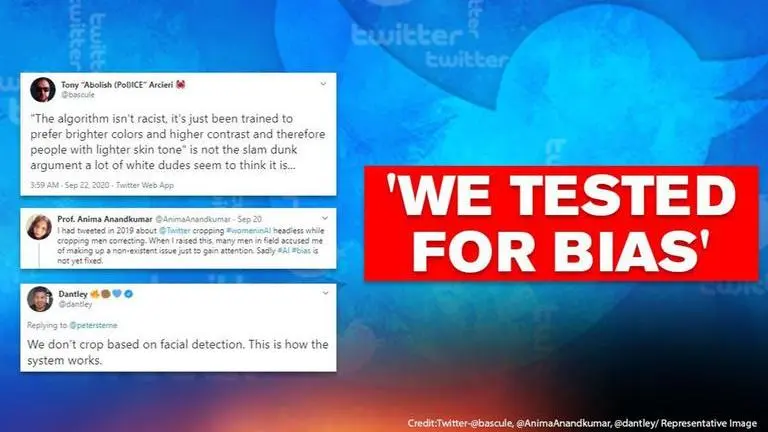

A Twitter user alleged that the image preview and cropping algorithm on the social media app is biased and picks white faces over black faces. The user tweeted several experimental pictures to support his claim, noting that Professor Anima Anandkumar, director of AI research at NVIDIA, was the first to point out the bias in 2019. He also suggested that people follow Joy Buolamwini, founder of the Algorithmic Justice League.

Buolamwini's organisation focuses on raising awareness about the impact of artificial intelligence and states that 'AI systems can amplify racism, sexism, ableism, and other forms of discrimination'. The user also named Ruha Benjamin, an author and activist who writes about racism in technology in her book 'Race After Technology'.

Responding to the user's experiment and the allegation of racial bias, Twitter said, "We tested for bias before shipping the model & didn't find evidence of racial or gender bias in our testing. But it’s clear that we’ve got more analysis to do. We'll continue to share what we learn, what actions we take, & will open-source it so others can review and replicate."

Twitter's Chief Design Officer, Dantley Davis, also responded to the controversy in an attempt to explain the situation.

Here's another example of what I've experimented with. It's not a scientific test as it's an isolated example, but it points to some variables that we need to look into. Both men now have the same suits and I covered their hands. We're still investigating the NN. pic.twitter.com/06BhFgDkyA

— Dantley 🔥✊🏾💙 (@dantley) September 20, 2020

User calls Twitter biased

To test for racial bias in Twitter's cropping algorithm, a user posted an image containing photos of Mitch McConnell and Barack Obama. In the image preview, the algorithm picked McConnell rather than the former US President. To check whether the 'colour of the tie' was driving the result, the user first posted McConnell in a red tie and Obama in a blue one, then reversed the colours with Obama in red and McConnell in blue. The results remained the same.

Twitter user Tony Arcieri then drew attention to the wider problem by sharing a study published in Nature that examines software and algorithms widely used in US hospitals to allocate healthcare. The study points out that the use of this software leads to racial bias and discrimination against people of colour. In another tweet, he said that people who attributed the result to 'contrast', editing the pictures to raise the contrast on Barack Obama and lower it on Mitch McConnell, were missing the core problem.

Several people have attributed the issue to contrast, dramatically raising the contrast on Obama and decreasing it until McConnell is a dull grey.

While this may get the algorithm to select the other image, it doesn't address the core problem. https://t.co/JvUXUGukBM

— Tony “Abolish (Pol)ICE” Arcieri 🦀 (@bascule) September 21, 2020

These images in particular, comparing low contrast to high contrast Obama, are much more telling. The algorithm seems to prefer a lighter Obama over a darker one: https://t.co/8EAYnYcRs3

— Tony “Abolish (Pol)ICE” Arcieri 🦀 (@bascule) September 21, 2020

Trying a horrible experiment...

Which will the Twitter algorithm pick: Mitch McConnell or Barack Obama? pic.twitter.com/bR1GRyCkia

— Tony “Abolish (Pol)ICE” Arcieri 🦀 (@bascule) September 19, 2020

This experiment came soon after Colin Madland, a university manager from Vancouver, was helping a faculty member with a problem on the video-calling app Zoom, which made the man's head disappear during calls. Madland claimed that the app's face-detection algorithm was so bad that it erased black faces; the man's face was visible only when a pale globe was placed in the background. Tweeting about it, Madland said, "Turns out @zoom_us has a crappy face-detection algorithm that erases black faces...and determines that a nice pale globe in the background must be a better face than what should be obvious."

INVISIBLE MAN. @zoom_us virtual background amputates a Black colleague’s head until he places a pale globe behind.😶

[photos by @colinmadland shared with permission] pic.twitter.com/Y6jYFfVlOD

— Ruha Benjamin (@ruha9) September 19, 2020

Earlier, in 2019, Professor Anima Anandkumar had pointed out that Twitter's AI is gender-biased: when a picture of men and women is tweeted, the image preview focuses on the men and often crops out the women's faces. Following this, several users posted similar pictures, collages and screenshots to show that the gender bias persists. Other Twitter users called it AI misogyny while pointing out the bias against women and people of colour.